A Guide to Securing Google Kubernetes Engine

It is no secret that organizations are striving to move their applications into containers. According to Mordor Intelligence, the application container market is expected to grow over 29% within the next five years, with Kubernetes achieving a growth of 48%. Companies such as Google and Amazon are at the forefront of enabling this effort by offering customers managed Kubernetes services. With this much growth in the Kubernetes space, security needs to be top of mind.

In this blog, we explore how to secure Google Kubernetes Engine (GKE) on Google Cloud. Note that this is a survey of security best practices for GKE rather than a deep dive. For a more in-depth understanding, follow the links within this article or give us a shout!

Keep IAM in Check

It can be argued that the security of a GKE cluster starts with Identity and Access Management (IAM). Having proper IAM in place makes the cluster easier to administer and improves security posture by limiting the blast radius of a compromised account. The best place to start with IAM policies in your GKE cluster is to ensure you are following the principle of least privilege.

Principle of Least Privilege

This principle can be summarized as assigning only the permissions needed to perform the work at hand and no more. Roles should be granted at the narrowest scope possible. Consider starting with predefined IAM roles, which have a reasonable set of permissions associated with them, and then paring them down using recommendations from tools such as the IAM recommender. In addition, using IAM conditions to grant access to identities only for a limited period of time is a quick way to tighten access further.
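To make the time-limited grant concrete, here is a minimal Python sketch that builds an IAM policy binding with an expiry condition. The role, the group address, and the eight-hour window are illustrative assumptions, not values from this guide. The condition uses the request.time comparison that IAM conditions support in their CEL expressions.

```python
from datetime import datetime, timedelta, timezone


def time_bound_binding(role, members, expires_at, title="temporary-access"):
    """Return an IAM policy binding dict that stops applying at expires_at."""
    # IAM conditions are CEL expressions; request.time is compared to a timestamp.
    stamp = expires_at.astimezone(timezone.utc).strftime("%Y-%m-%dT%H:%M:%SZ")
    return {
        "role": role,
        "members": sorted(members),
        "condition": {
            "title": title,
            "description": "Expires automatically",
            "expression": f'request.time < timestamp("{stamp}")',
        },
    }


# Hypothetical group and an 8-hour window, chosen only for illustration.
start = datetime(2024, 1, 1, 9, 0, tzinfo=timezone.utc)
binding = time_bound_binding(
    "roles/container.viewer",
    ["group:gke-oncall@example.com"],
    start + timedelta(hours=8),
)
print(binding["condition"]["expression"])
```

A binding like this would be added to the bindings list of a project's IAM policy. Policies that contain conditional bindings need to be written with policy version 3.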

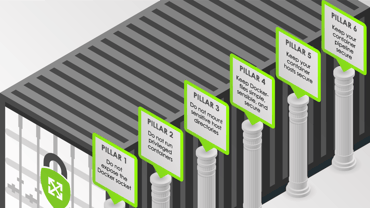

Don’t Use the Default Service Account

Unfortunately, security by default falls short when it comes to compute nodes in GCP. This is exemplified by the default permissions of a Compute Engine virtual machine, i.e. the service account that is automatically assigned to it. Using the default service account should be avoided, since the Compute Engine default service account is automatically granted the overly permissive project editor (roles/editor) role. Instead, create a minimally privileged service account to be associated with your GKE cluster nodes. A great preventative measure is to use an organization policy constraint (iam.automaticIamGrantsForDefaultServiceAccounts) to disable the automatic grant of the roles/editor role for all projects.
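As a rough illustration, the check below flags node configurations that still run as the default Compute Engine identity. It assumes the node config is a dict shaped like the GKE API's node config (a serviceAccount field that falls back to "default" when unset). The sample email addresses are made up.

```python
import re

# Default Compute Engine service accounts follow this email pattern.
DEFAULT_COMPUTE_SA = re.compile(r"^\d+-compute@developer\.gserviceaccount\.com$")


def uses_default_service_account(node_config):
    """True when a node config falls back to the default Compute Engine identity."""
    account = node_config.get("serviceAccount", "default")
    return account == "default" or bool(DEFAULT_COMPUTE_SA.match(account))


# Hypothetical node configs for illustration.
print(uses_default_service_account({"serviceAccount": "default"}))
print(uses_default_service_account(
    {"serviceAccount": "gke-nodes@my-project.iam.gserviceaccount.com"}
))
```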

Role Based Access Control (RBAC)

IAM and RBAC work hand-in-hand when it comes to GKE because they are tightly coupled by design. GKE authorizes an action on a Kubernetes resource when the identity has sufficient permissions at either the IAM or the RBAC level, so an overly broad IAM role can undercut carefully scoped RBAC rules. It is generally recommended to assign IAM roles for GKE using groups (which can contain multiple members) and use RBAC to grant permissions at the cluster and namespace levels. This is important when enforcing workload boundaries, which we delve into further in the next section.
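A namespace-scoped grant to a group might look like the sketch below, which builds a Kubernetes RoleBinding manifest as a Python dict and prints it as JSON (which kubectl accepts alongside YAML). The namespace, group address, and role name are hypothetical, and binding to a Google group assumes that group-based RBAC has been set up for the cluster.

```python
import json

RBAC_API_GROUP = "rbac.authorization.k8s.io"


def namespace_role_binding(namespace, group_email, role_name):
    """Bind an existing namespaced Role to a group of users."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": f"{role_name}-binding", "namespace": namespace},
        "subjects": [
            {"kind": "Group", "name": group_email, "apiGroup": RBAC_API_GROUP}
        ],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_API_GROUP},
    }


# Hypothetical names for illustration only.
manifest = namespace_role_binding("team-a", "team-a-devs@example.com", "pod-reader")
print(json.dumps(manifest, indent=2))
```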

Attribute Based Access Control (ABAC)

Before we discuss workload boundaries, it is worthwhile to explore ABAC on Kubernetes. Many cloud practitioners may feel naturally inclined to use ABAC, since intuitively privileges can be fine-grained and based on attributes rather than relying on RBAC policies. ABAC is a powerful strategy for IAM that is touted as superior (rightly so) in other clouds and outside the context of Kubernetes. However, due to the way ABAC is implemented in Kubernetes (not just GKE), the Kubernetes working group has considered ABAC deprecated since 2017. By default, GKE 1.8+ disables ABAC (also known as Legacy Authorization), and this setting should stay disabled for a properly secured cluster.
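One way to verify this setting is to inspect the cluster description. The sketch below assumes JSON shaped like the output of gcloud container clusters describe with JSON formatting, where the legacyAbac object carries an enabled flag only when Legacy Authorization is on. The sample documents are invented.

```python
import json


def legacy_abac_enabled(cluster):
    """True when the cluster description reports Legacy Authorization as on."""
    legacy = cluster.get("legacyAbac") or {}
    return bool(legacy.get("enabled", False))


# Hypothetical cluster descriptions for illustration.
hardened = json.loads('{"name": "prod", "legacyAbac": {}}')
risky = json.loads('{"name": "old", "legacyAbac": {"enabled": true}}')
print(legacy_abac_enabled(hardened), legacy_abac_enabled(risky))
```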

Properly Enforce Workload Boundaries

Along similar lines to IAM, the separation of tenants is also important in limiting the impact a compromise can have, thus reducing risk. A tenant can be a particular environment such as development, testing, or production. A tenant could also be a team, a user, a customer, or any other group that should be separated from the others from a security perspective. Kubernetes offers some mechanisms by default to perform this segregation, and GKE can take it a step further by using a sandbox.

Namespaces

Namespaces are a native way to perform tenant separation on Kubernetes. Following a tenant-per-namespace model is a common pattern and effectively reduces blast radius and the risk of data leakage between different tenants within the same Kubernetes cluster. Access between namespaces can be restricted using Kubernetes policies, which can define limits, quotas, and pod security policies.
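The tenant-per-namespace pattern can be sketched as a pair of manifests: a Namespace and a ResourceQuota that caps what the tenant can consume. The tenant name and quota values below are placeholders picked for illustration, not recommendations.

```python
import json


def tenant_manifests(tenant, max_pods, cpu_requests, memory_requests):
    """Return a Namespace and a ResourceQuota manifest for one tenant."""
    namespace = {
        "apiVersion": "v1",
        "kind": "Namespace",
        "metadata": {"name": tenant, "labels": {"tenant": tenant}},
    }
    quota = {
        "apiVersion": "v1",
        "kind": "ResourceQuota",
        "metadata": {"name": f"{tenant}-quota", "namespace": tenant},
        "spec": {
            "hard": {
                "pods": str(max_pods),
                "requests.cpu": cpu_requests,
                "requests.memory": memory_requests,
            }
        },
    }
    return [namespace, quota]


# Hypothetical tenant and limits.
manifests = tenant_manifests("team-a", 20, "4", "8Gi")
print(json.dumps(manifests, indent=2))
```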

GKE Sandbox

When running workloads that may not be trusted yet, GKE Sandbox provides an extra layer of isolation that protects the underlying host kernel from potential security issues. It is recommended to use GKE Sandbox only on untrusted workloads in lower non-production environments such as sandbox environments and development environments. GKE Sandbox shouldn’t be used in production under normal circumstances.

Restrict Network Access

Having a properly secured IAM boundary is one part of a healthy and secure GKE cluster. Almost as important is securing the network boundary of GKE. With Kubernetes, network access can be restricted on several levels, including communication with the control plane, communication between nodes, and communication between pods. We will briefly go over each in the next several subsections.

Protect the Control Plane with Private Clusters

A Kubernetes control plane manages nodes and pods within the Kubernetes cluster. Since it is responsible for some of the most important tasks that Kubernetes performs (such as scheduling pods and autoscaling nodes), it goes without saying that the components of the control plane should be as isolated from the internet as possible. Creating a private cluster helps to reduce the attack surface of the control plane by disabling public endpoint access to the Kubernetes control plane or restricting it to authorized networks only.

Use Authorized Networks and Private Nodes

Creating a private cluster helps to secure nodes as well. Enabling a GKE private cluster means that nodes only have internal IP addresses, which isolates them from the internet by default. To get private nodes, specify the --enable-private-nodes option when creating the cluster. If using Terraform, ensure that a private_cluster_config block is specified for the google_container_cluster resource.
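Putting the last two subsections together, the sketch below assembles a gcloud command line for a private cluster with authorized networks. The cluster name and CIDR ranges are placeholders, and the exact set of required flags may differ between gcloud releases, so treat this as a starting point rather than a canonical invocation.

```python
import shlex


def private_cluster_command(name, master_cidr, authorized_cidrs):
    """Build a gcloud argv list for a private GKE cluster."""
    return [
        "gcloud", "container", "clusters", "create", name,
        "--enable-ip-alias",                    # VPC-native networking
        "--enable-private-nodes",               # nodes get internal IPs only
        "--master-ipv4-cidr", master_cidr,      # private range for the control plane
        "--enable-master-authorized-networks",  # restrict who can reach the endpoint
        "--master-authorized-networks", ",".join(authorized_cidrs),
    ]


# Placeholder name and ranges for illustration.
command = private_cluster_command("demo-cluster", "172.16.0.0/28", ["203.0.113.0/24"])
print(shlex.join(command))
```

Building the command as a list keeps each argument separate, which avoids shell-quoting mistakes if it is later passed to subprocess.run.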

Consider Network Policies and Service Mesh

Pods consist of one or more containers that run various workloads on the cluster. Once the control plane and nodes are properly configured with IAM and network controls, restricting pod-to-pod communication as needed for workloads is a good way to increase your security posture. This is easy to do with Kubernetes Network Policies which can be set in GKE. For more advanced pod-to-pod network scenarios such as circuit breaking, service authorization, quotas and throttling, consider using a service mesh such as Istio which can be included as a GKE cluster add-on.
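A common starting point for pod-to-pod restrictions is a default-deny ingress policy per namespace plus narrow allow rules. The sketch below builds both as NetworkPolicy manifests. The namespace, app labels, and port are hypothetical, and the policies only take effect if network policy enforcement is enabled on the cluster.

```python
import json


def default_deny_ingress(namespace):
    """Select every pod in the namespace and allow no ingress traffic."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": "default-deny-ingress", "namespace": namespace},
        "spec": {"podSelector": {}, "policyTypes": ["Ingress"]},
    }


def allow_app_to_app(namespace, target_app, source_app, port):
    """Allow TCP traffic to target_app pods only from source_app pods."""
    return {
        "apiVersion": "networking.k8s.io/v1",
        "kind": "NetworkPolicy",
        "metadata": {"name": f"allow-{source_app}-to-{target_app}", "namespace": namespace},
        "spec": {
            "podSelector": {"matchLabels": {"app": target_app}},
            "policyTypes": ["Ingress"],
            "ingress": [{
                "from": [{"podSelector": {"matchLabels": {"app": source_app}}}],
                "ports": [{"protocol": "TCP", "port": port}],
            }],
        },
    }


# Hypothetical namespace and labels.
policies = [default_deny_ingress("team-a"), allow_app_to_app("team-a", "api", "web", 8080)]
print(json.dumps(policies, indent=2))
```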

Harden Your Nodes

Nodes are the virtual machines that containers run on top of within a Kubernetes cluster. Protecting them is an important part of a defense-in-depth strategy with GKE. Staying on top of updates and having a secure configuration is possible with some helpful options that can be specified in a GKE cluster.

Stay Up to Date

Threats to GKE are constantly being discovered. Zero days and other vulnerabilities can compromise your cluster, leading to a breach in the confidentiality, integrity, and/or availability of your services. In order to stay on top of patches and fixes for threats such as these, it is important to keep your nodes up to date. Luckily, GKE can keep nodes up to date automatically via node auto-upgrade. It is recommended to keep this option enabled.

Shielded VMs

Shielded VMs are hardened compute environments that defend against rootkits and other malware - for more information check out our blog outlining Shielded VMs and integrity monitoring. To enable Shielded GKE nodes, specify the --enable-shielded-nodes option when creating or updating the cluster. Optionally, use the --shielded-secure-boot flag at cluster creation to enable secure boot, which checks for any unsigned (potentially unsafe) kernel-level modules.

GCP Shielded VM — Integrity Monitoring
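The node hardening settings from this section can be checked together. The sketch below walks a cluster description (assumed to be JSON shaped like the GKE API's cluster resource, with shieldedNodes at the cluster level and management and shieldedInstanceConfig on each node pool) and reports anything that is switched off. The sample cluster is invented.

```python
def node_hardening_findings(cluster):
    """List node hardening options that are disabled in a cluster description."""
    findings = []
    if not (cluster.get("shieldedNodes") or {}).get("enabled", False):
        findings.append("shielded nodes disabled")
    for pool in cluster.get("nodePools", []):
        name = pool.get("name", "<unnamed>")
        if not (pool.get("management") or {}).get("autoUpgrade", False):
            findings.append(f"{name}: auto-upgrade disabled")
        shielded = (pool.get("config") or {}).get("shieldedInstanceConfig") or {}
        if not shielded.get("enableSecureBoot", False):
            findings.append(f"{name}: secure boot disabled")
    return findings


# Hypothetical cluster description for illustration.
sample_cluster = {
    "shieldedNodes": {"enabled": True},
    "nodePools": [
        {"name": "default-pool", "management": {"autoUpgrade": True},
         "config": {"shieldedInstanceConfig": {"enableSecureBoot": True}}},
        {"name": "batch-pool", "management": {}, "config": {}},
    ],
}
for finding in node_hardening_findings(sample_cluster):
    print(finding)
```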

Protect Your Data

Not all data is created equal. Some data is more sensitive than other data and warrants higher levels of security controls. In addition, your GKE cluster may contain data that gives access to even more data - a secret. Secrets include, but are not limited to, certificates, API keys, and passwords. Having a grasp on what data needs to be protected is the first step in data protection, and the protection itself can then be managed using the methods outlined below.

Manage Your Secrets

Let’s face it - we all have secrets. Applications and workloads running on Kubernetes are no exception to this. In order to prevent the dilemma of “secret sprawl”, it is imperative to centralize all application secrets in a secrets management solution as soon as possible. For those who need help selecting a solution for GCP, we have performed a comparison of secrets managers that explains the differences between leading solutions in this area. Once a secrets manager is selected, it should be integrated with GKE clusters via a Kubernetes or Google Cloud service account. For those who prefer not to have a secrets manager external to the GKE cluster, using native Kubernetes secrets in GKE is possible, and they should be encrypted at the application layer using a Cloud Key Management Service (Cloud KMS) key.

A Comparison of Secrets Managers for GCP
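For clusters that rely on native Kubernetes secrets, a quick check that application-layer encryption is on might look like the sketch below. It assumes the cluster description exposes a databaseEncryption object with state and keyName fields, as the GKE API's cluster resource does. The project, key ring, and key names are placeholders.

```python
def kms_key_name(project, location, key_ring, key):
    """Build the full Cloud KMS resource name for a key."""
    return (
        f"projects/{project}/locations/{location}"
        f"/keyRings/{key_ring}/cryptoKeys/{key}"
    )


def secrets_encrypted_with(cluster, expected_key):
    """True when secrets are application-layer encrypted with the expected key."""
    encryption = cluster.get("databaseEncryption") or {}
    return (
        encryption.get("state") == "ENCRYPTED"
        and encryption.get("keyName") == expected_key
    )


# Placeholder identifiers for illustration.
key = kms_key_name("my-project", "us-central1", "gke-ring", "gke-secrets")
sample = {"databaseEncryption": {"state": "ENCRYPTED", "keyName": key}}
print(key)
print(secrets_encrypted_with(sample, key))
```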

Keys to the Data

Protecting your data at rest is performed automatically since Google encrypts all data at rest by default; however, there are situations (e.g. meeting compliance requirements) where more control is desired. GKE supports Cloud KMS to give customers control over the cryptographic key material that protects data residing within GKE. Using customer-managed encryption keys (CMEK) allows customers to manage rotation periods and opens the door to cryptoshredding of data. Note that CMEK can protect both node boot disks and attached disks (PersistentVolumes) used by pods for durable storage. To reiterate, CMEK should only be considered if a compliance requirement dictates its use - otherwise it is not worth the overhead introduced by having the management of the key delegated to you, the customer.

Audit All the Things

Logging and monitoring are essential functions in detecting indicators of compromise (IoC) within a GKE cluster. Two main services are of interest here - Cloud Logging for the logging functionality and Security Health Analytics (SHA) for the security monitoring function. Also notable is Google Cloud’s container runtime protection, Container Threat Detection, which is built into Security Command Center’s (SCC) premium tier, offered at a minimum annual cost of $25,000. Because this is a high barrier to entry for many, we choose to keep the discussion to Cloud Logging and SHA.

Cloud Logging

GKE generates many logs including Cloud Audit Logs, System Logs, and Container logs written to STDOUT or STDERR. Leveraging these logs in a security information and event management (SIEM) tool such as Splunk is very useful for finding potential IoCs as they happen. Using Cloud Logging is useful in creating event-oriented workflows that can lead to the automatic remediation of misconfigurations and compromise. For a suite of automated remediations (some GKE specific) that we are actively working on, see Project Lockdown.

Announcing Project Lockdown
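As a small example of hunting for IoCs in these logs, the sketch below builds a Cloud Logging filter string for Admin Activity audit entries on a GKE cluster. The project ID and the method-name fragment are assumptions for illustration. The colon operator performs a substring match, so the fragment does not need to be the full method name.

```python
def gke_audit_filter(project_id, method_fragment):
    """Build a Cloud Logging filter for GKE Admin Activity audit entries."""
    log_name = f"projects/{project_id}/logs/cloudaudit.googleapis.com%2Factivity"
    clauses = [
        'resource.type="k8s_cluster"',
        f'logName="{log_name}"',
        f'protoPayload.methodName:"{method_fragment}"',
    ]
    return " AND ".join(clauses)


# Hypothetical project; looks for changes touching cluster role bindings.
print(gke_audit_filter("my-project", "clusterrolebindings"))
```

The resulting string can be pasted into the Logs Explorer or used as the filter of a log sink that feeds a SIEM.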

Security Health Analytics

Security Health Analytics is a service built into SCC that monitors an inventory of assets, including GKE clusters, for vulnerabilities and threats. This is a simple way to monitor GKE security inside of Google Cloud instead of relying on logs that are exported to other destinations. SHA contains an entire group of GKE findings, including auto-upgrade disabled, cluster logging disabled, and so on.

Everything as Code

The cloud is about moving from hardware to software and, as such, coding should be embraced. Coding out infrastructure helps to greatly increase the security and effectiveness of Google Cloud. Defining GKE clusters with infrastructure as code (IaC) has the added benefit of being evaluated with tests and Policy as Code to check for security misconfigurations before infrastructure is deployed in a cloud environment.

TDD in Your Infrastructure Pipeline

Infrastructure as Code

At ScaleSec, Terraform is our IaC tool of choice. Using the official Google module for GKE offers great benefits, including ease of use and opinionated (more secure than default) cluster creation. Tools that scan infrastructure as code, such as Chef InSpec, can be useful for validating compliance and secure configuration.
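A lightweight pre-deployment test can be written against Terraform's JSON plan output. The sketch below assumes a plan exported with terraform show -json, where each entry under resource_changes carries the planned attributes in change.after and nested blocks appear as lists. The sample plan is invented and much smaller than a real one.

```python
def insecure_gke_clusters(plan):
    """Report planned google_container_cluster resources with risky settings."""
    problems = []
    for change in plan.get("resource_changes", []):
        if change.get("type") != "google_container_cluster":
            continue
        after = (change.get("change") or {}).get("after")
        if after is None:  # resource is being deleted, nothing to check
            continue
        address = change.get("address", "<unknown>")
        private_cfg = after.get("private_cluster_config") or [{}]
        if not private_cfg[0].get("enable_private_nodes", False):
            problems.append(f"{address}: private nodes not enabled")
        if after.get("enable_legacy_abac", False):
            problems.append(f"{address}: legacy ABAC enabled")
    return problems


# Invented plan fragment for illustration.
sample_plan = {
    "resource_changes": [
        {"address": "google_container_cluster.good", "type": "google_container_cluster",
         "change": {"after": {"private_cluster_config": [{"enable_private_nodes": True}],
                              "enable_legacy_abac": False}}},
        {"address": "google_container_cluster.bad", "type": "google_container_cluster",
         "change": {"after": {"enable_legacy_abac": True}}},
    ]
}
for problem in insecure_gke_clusters(sample_plan):
    print(problem)
```

A check like this can run in a pipeline step between terraform plan and terraform apply, failing the build when the list is not empty.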

Policy as Code

Kubernetes Admission Controllers are powerful plugins that allow or disallow certain actions on a cluster. Leveraging them via controls such as Gatekeeper and Pod Security Policies is the preferred method of defining rules for creations and updates that happen within a GKE cluster. It’s important to work closely with the compliance team to see what should or should not be allowed into a Kubernetes cluster and then write appropriate rules to prevent violations from occurring. Policy as Code can be seen as building effective guardrails (not roadblocks) that allow rapid development to continue while also enforcing proper security controls on a pre-deployment basis.
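To show the kind of rule such a guardrail encodes, here is a plain Python stand-in that evaluates a Pod manifest. It is not Gatekeeper's policy language or an admission webhook, only an illustration of the logic, and the two rules (no privileged containers, no host networking) are examples rather than a complete policy.

```python
def pod_violations(pod):
    """Return rule violations for a Pod manifest given as a dict."""
    spec = pod.get("spec") or {}
    found = []
    if spec.get("hostNetwork", False):
        found.append("hostNetwork is not allowed")
    containers = (spec.get("containers") or []) + (spec.get("initContainers") or [])
    for container in containers:
        security = container.get("securityContext") or {}
        if security.get("privileged", False):
            name = container.get("name", "<unnamed>")
            found.append(f"container {name} is privileged")
    return found


# Hypothetical pod for illustration.
sample_pod = {
    "kind": "Pod",
    "spec": {
        "hostNetwork": True,
        "containers": [
            {"name": "app", "securityContext": {"privileged": True}},
            {"name": "sidecar"},
        ],
    },
}
for violation in pod_violations(sample_pod):
    print(violation)
```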

Some Final Thoughts

When it comes to security, the common themes boil down to people, processes, and technology. Although this guide has focused on highlighting the technical controls necessary to secure a GKE cluster, having folks on staff who are container-security minded and having robust, cloud-native processes such as consistently assessing security posture are just as important!

The Pillars of Secure Containers