Zero Trust in the Cloud

For decades, protecting your environment has relied heavily on defending an external perimeter. Organizations invest in that perimeter to keep attackers out, on the assumption that whatever lives inside it must be safe. Right? Unfortunately, malicious tactics continue to advance at a pace that even a strong, well-managed network perimeter cannot keep up with. The network perimeter and segmentation models we have come to know and rely on are still valuable, but they are not enough to keep your most valuable resources and data secure.

With the continuous security and data breaches reported in the news, it is clear that traditional security controls are not getting the job done. It is a regrettably safe assumption that somewhere within the perimeter there is a compromised system which has not yet been found. Enterprises that allow highly mobile systems (BYOD) such as mobile phones, tablets, and other systems, which frequently traverse between managed and unmanaged network spaces, significantly increase the risk on their network. Organizations must deploy systems with a defensive strategy that assumes there are compromised systems within the perimeter.

Zero Trust as a cybersecurity concept takes the classic adage “trust, but verify” and turns it into “never trust, always verify.” If we cannot ensure that every system within our perimeter is trustworthy and uncompromised, we must assume that all systems our resources interact with are untrustworthy until proven otherwise. The stakes are too high for a high value system, with sensitive data, to interact with a malicious system.

This challenge has been recognized at the US national level. In 2021, the White House released an Executive Order which stated (among other important declarations) that the White House expects government organizations and associated partners and supply chain participants to “advance toward Zero Trust Architecture (ZTA).” This directive has created a large push for Zero Trust approaches, which had already been growing in popularity over the past decade. As the industry and security product market respond to this endorsement, we should ask: “How do we verify and ensure secure interactions in an untrusted space?”

National Institute of Standards and Technology (NIST)

In August 2020, ahead of the Executive Order, the National Institute of Standards and Technology (NIST) published the final version of its Zero Trust Architecture Special Publication (SP) 800-207, which describes Zero Trust as follows:

“Zero trust (ZT) is the term for an evolving set of cybersecurity paradigms that move defenses from static, network-based perimeters to focus on users, assets, and resources.”

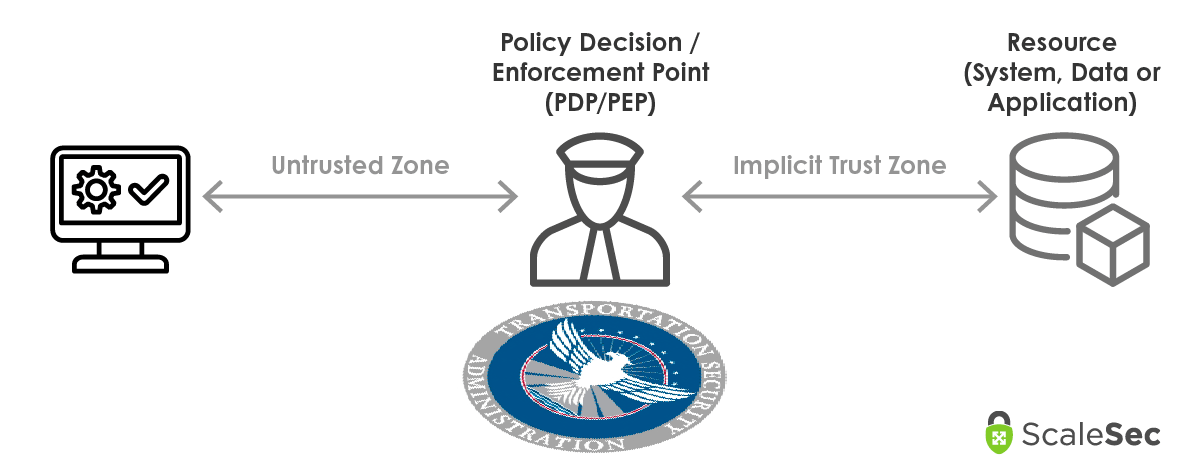

NIST refers to spaces which have not yet adopted a Zero Trust model as Implicit Trust Zones. As you adopt Zero Trust, the first objective is to reduce the presence and scope of Implicit Trust Zones.

Core tenets that NIST outlines within its Zero Trust Architecture (NIST SP 800-207, Section 2.1):

- No network is implicitly considered trusted

- All communication is secured regardless of network location

- Access to individual resources is granted on a per-session basis

- Access to resources is determined by dynamic policy enforcement

- The enterprise monitors and measures the integrity and security posture of all owned and associated assets

- All resource authentication and authorization are dynamic and strictly enforced before access is allowed

- The enterprise collects as much information as possible about the current state of assets, network infrastructure and communications and uses it to improve its security posture

These core tenets call out strategic areas of identity and access management, dynamic policy enforcement to broker connections, and a strong continuous monitoring program.

Zero Trust through Identity Management

In an untrusted space, identity is the new primary means of control. Identity interactions fall into two types: human-to-system and system-to-system, where a "system" is any resource deployed in a Zero Trust environment. This means that both humans and systems need a means of authentication and authorization.

For human access, a centralized enterprise identity management system (such as Okta, Ping, Microsoft AD, or Google Workspace, among others) is necessary. It must be compatible with all systems that human users might need to access. If gaps remain, and some systems cannot be integrated with centralized identity management, those systems should be acknowledged and accepted by management as a security risk. Access should be granted on a role basis and, apart from possible exception cases, should not be managed for individual users. The goal is to implement Role Based Access Control (RBAC) by determining the roles that need access to systems, and then determining which human identities should be assigned to those roles.
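As a minimal sketch of the role-based model described above, the check below resolves a user to roles and roles to permissions. The role names, user names, and permission strings are all hypothetical, and in practice these mappings would live in the identity provider rather than in application code.

```python
# Hypothetical mappings for illustration only; a real deployment would
# source these from the centralized identity management system.
ROLE_PERMISSIONS = {
    "billing-analyst": {"billing-db:read"},
    "platform-engineer": {"billing-db:read", "deploy-pipeline:run"},
}

USER_ROLES = {
    "alice": {"platform-engineer"},
    "bob": {"billing-analyst"},
}


def is_allowed(user: str, permission: str) -> bool:
    """Return True if any role assigned to the user grants the permission."""
    roles = USER_ROLES.get(user, set())
    return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
```

Note that permissions are never attached to a user directly: changing what "bob" can do means changing his role assignment, which keeps access reviews manageable. Unknown users fall through to an empty role set and are denied by default.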

Human users should all be required to use Multi-Factor Authentication (MFA) wherever it is technically possible. This is a critical countermeasure against credential theft and phishing attacks. If the space is not trusted (Zero Trust), we must assume credentials have been compromised. MFA makes it far harder for an attacker holding compromised credentials to access the system.

For system-to-system access in the cloud (both AWS and GCP), deployed resources can use IAM roles (AWS) or service accounts (GCP). This lets access between cloud services be governed by identity rather than by network controls.

Both AWS and GCP also allow for attribute-based metadata (tags or labels, depending on the platform), which gives you a more detailed means to identify and sort individual identities, cloud resources, and data repositories. Attributes on both the accessing identities and the recipient resources should be used for Attribute Based Access Control (ABAC). Use these tags to inform both the access logic in your permissions and Policy Enforcement, as we will address in the next section.
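The attribute-based idea can be sketched in a few lines. This is an illustrative model only, not either platform's evaluation logic: the `Entity` type, the tag keys (`application`, `environment`), and the rule that all listed tags must match are assumptions chosen for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Entity:
    """An identity or a resource, described only by its tags (labels)."""
    name: str
    tags: dict = field(default_factory=dict)


def abac_allows(principal: Entity, resource: Entity,
                match_keys=("application", "environment")) -> bool:
    """Allow only when every listed tag is present on both sides and equal."""
    for key in match_keys:
        value = principal.tags.get(key)
        if value is None or value != resource.tags.get(key):
            return False
    return True
```

A missing tag is treated as a denial rather than a wildcard, so untagged identities and resources fail closed.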

Zero Trust through Policy Enforcement

To broker connectivity between systems, NIST’s ZTA advises the use of Policy Enforcement Points. A useful analogy is passing through TSA screening before a flight at a US airport. The checkpoint applies criteria for Policy Decisions before granting access to the terminal, such as:

- Identities (travelers) must carry two bags or fewer

- Identities (travelers) must not have illegal substances with them

- Identities (travelers) must have legal proof of identity (an ID or passport)

- Identities (travelers) must have proof of their destination (a plane ticket)

- Identities (travelers) must put their belongings, including shoes, through scanning and monitoring

Travelers can have attributes which change how their access is evaluated, such as TSA Pre-Check, Global Entry, or CLEAR. If you have TSA Pre-Check, this attribute attached to your traveler identity means some checks have already occurred; for example, you do not have to put your shoes through the scanner.

Like TSA at the airport, access logic is evaluated and applied (and adjusted based on attributes) at Policy Enforcement Points (PEPs) on zone-to-zone and system-to-system connections to determine whether access should be granted.

Policy Decision Point in connectivity and interactions between systems

The Policy Engine (PE) is what evaluates and makes access decisions based on policy logic. NIST describes the PE as

“responsible for the ultimate decision to grant access to a resource for a given subject. The PE uses enterprise policy… as input to a trust algorithm to grant, deny, or revoke access to the resource. The PE is paired with the policy administrator component. The policy engine makes and logs the decision (as approved, or denied), and the policy administrator executes the decision.”

The Policy Administrator (PA) is what acts on the decision made by the Policy Engine. This is the mechanism that

“is responsible for establishing and/or shutting down the communication path between a subject and a resource (via commands to relevant PEPs). It would generate any session-specific authentication and authentication token or credential used by a client to access an enterprise resource. It is closely tied to the PE and relies on its decision to ultimately allow or deny a session. The PA communicates with the PEP when creating the communication path. This communication is done via the control plane.”

A Policy Enforcement Point (PEP) is where the decisions of the Policy Engine and Policy Administrator are carried out: it brokers, grants, and revokes access between two entities (human or system). Typically,

“[t]he PEP communicates with the PA to forward requests and/or receive policy updates from the PA.”
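The division of labor between the three components can be sketched as follows. This is a toy model under stated assumptions, not an implementation of SP 800-207: the class and method names, the `AccessRequest` fields, and the trust rule (MFA plus a trusted device) are all hypothetical.

```python
import secrets
from dataclasses import dataclass


@dataclass
class AccessRequest:
    subject: str
    resource: str
    mfa_passed: bool
    device_trusted: bool


class PolicyEngine:
    """Makes and logs the grant/deny decision."""

    def __init__(self):
        self.decision_log = []

    def decide(self, request: AccessRequest) -> bool:
        # Hypothetical trust algorithm: require MFA and a trusted device.
        approved = request.mfa_passed and request.device_trusted
        self.decision_log.append((request.subject, request.resource, approved))
        return approved


class PolicyAdministrator:
    """Executes the engine's decision by issuing or withholding a session token."""

    def __init__(self, engine: PolicyEngine):
        self.engine = engine

    def authorize(self, request: AccessRequest):
        if self.engine.decide(request):
            return secrets.token_hex(16)  # session-specific credential
        return None


class PolicyEnforcementPoint:
    """Sits in the data path and opens a session only when the PA issues a token."""

    def __init__(self, administrator: PolicyAdministrator):
        self.administrator = administrator
        self.sessions = {}

    def connect(self, request: AccessRequest) -> bool:
        token = self.administrator.authorize(request)
        if token is None:
            return False
        self.sessions[token] = (request.subject, request.resource)
        return True

    def revoke(self, subject: str) -> None:
        """Shut down every open session belonging to the subject."""
        self.sessions = {t: s for t, s in self.sessions.items() if s[0] != subject}
```

The subject only ever talks to the PEP; the decision and the credential are produced behind it, which is what lets access be granted per session and revoked later without touching the resource itself.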

Zero Trust through Continuous Monitoring

The state of the environment and policy enforcement transactions should be continuously monitored to identify malicious actors, rogue systems, and inappropriate connections between systems as soon as possible. To accomplish this, a few different capabilities are required:

- A system and data (asset) inventory that is maintained automatically (including tags, labels, and other metadata), including system patching and vulnerability status

- Incident monitoring and alerting in a SIEM to identify active security threats

- Logging for Policy Enforcement transactions

This continuous monitoring of the current state of the environment allows for more flexibility in architecture and environment design. Since we cannot trust what is deployed within the environment, we are focusing on interaction between systems. Zero Trust implementation relies on continuous monitoring to identify and remove suspicious activity, rogue assets, and threats as soon as they appear.
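One simple use of logged policy enforcement transactions is to surface subjects that are repeatedly denied, which may indicate a rogue system or a misconfigured one. The sketch below assumes a hypothetical record format (dictionaries with `subject` and `approved` keys) and an arbitrary threshold; a real deployment would typically express this as a SIEM rule instead.

```python
from collections import Counter


def flag_repeated_denials(decisions, threshold=3):
    """Return subjects whose denied requests meet or exceed the threshold, sorted by name."""
    denied = Counter(d["subject"] for d in decisions if not d["approved"])
    return sorted(subject for subject, count in denied.items() if count >= threshold)
```

Approved requests are ignored here on purpose; the function only counts denials, so a subject with many successful connections and one failure is not flagged.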

Zero Trust in the Cloud

Both AWS and GCP (and other platforms) offer mechanisms for asset management, federated identity access, Role and Attribute Based Access Control, multiple means of Policy Enforcement, and continuous monitoring. NIST also offers guidance on multi-cloud architectures for Zero Trust.

Zero Trust with Amazon Web Services (AWS)

Identity

Amazon makes it clear that they have multiple capabilities which address Zero Trust, but also that network-based controls should not be neglected. They are absolutely correct. AWS has identity-centric controls such as federated access and flexible IAM management, where you can grant conditional access to resources based on dynamic tagging.
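As an illustration of tag-conditioned access, the sketch below builds an IAM policy document that allows an action only when a tag on the resource matches the same tag on the calling principal. The `aws:ResourceTag/...` and `aws:PrincipalTag/...` condition keys are AWS global condition keys; the helper name, the tag key `application`, and the chosen action are assumptions for the example, and you should confirm that the services you use support tag-based conditions for the actions in question.

```python
import json


def same_tag_policy(tag_key: str, actions: list) -> dict:
    """Build a policy allowing the actions only when resource and principal share a tag value."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Action": actions,
                "Resource": "*",
                "Condition": {
                    "StringEquals": {
                        # The policy variable is resolved by IAM at evaluation time.
                        "aws:ResourceTag/" + tag_key: "${aws:PrincipalTag/" + tag_key + "}"
                    }
                },
            }
        ],
    }


# Hypothetical usage: the tag key and action are examples only.
policy = same_tag_policy("application", ["secretsmanager:GetSecretValue"])
print(json.dumps(policy, indent=2))
```

Because the condition compares tags rather than naming specific resources, newly deployed resources are covered as soon as they are tagged, without editing the policy.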

Policy Enforcement

Policy enforcement can be done through the identity controls above, combined with attributes assigned to resources and data, which relies on a comprehensive tagging strategy. All resources deployed to your environment should be tagged with at least function, application, data type, sensitivity, and resource owner. This information allows access to be evaluated and granted (or revoked) based on the metadata and attributes of deployed resources.
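A tagging strategy is only useful if it is enforced, so a simple compliance check is a reasonable starting point. The sketch below uses hypothetical tag key spellings for the five attributes listed above; adapt them to whatever naming convention your organization adopts.

```python
# Hypothetical key names for the five required attributes.
REQUIRED_TAGS = ("function", "application", "data-type", "sensitivity", "owner")


def missing_tags(resource_tags: dict) -> list:
    """Return the required tag keys that are absent or empty on a resource."""
    return [key for key in REQUIRED_TAGS if not resource_tags.get(key)]
```

An empty string counts as missing, since a blank `owner` or `sensitivity` value gives policy logic nothing to evaluate.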

Continuous Monitoring

AWS has multiple services dedicated to logging, monitoring, and alerting on security-related activity, including AWS CloudTrail, Amazon GuardDuty, and AWS Config. These services, combined with AWS Security Hub and/or a third-party Security Information and Event Management (SIEM) tool, are an excellent foundation for ZTA monitoring.

Zero Trust with Google Cloud Platform (GCP)

BeyondCorp is Google’s answer to Zero Trust. BeyondCorp creates a centralized means for the Zero Trust capabilities required to shift and expand beyond your environment’s reliance on network security controls. Its implementation is heavily focused on human access, offering means to grant access to resources based on who the user is and whether they are accessing it from a trusted device. BeyondCorp offers identity- and context-aware access control, providing capabilities that align with Zero Trust Identity and Policy Enforcement needs.

Separate from BeyondCorp, Google’s Cloud Asset Inventory and Identity-Aware Proxy are just a few ways to further gain visibility into your environment and provide communication brokering and access control within your architecture.

Zero Trust is a large undertaking which will take organizations years to fully achieve. Start by building out your roadmap and then implementing one capability at a time. The risk reduction and improved developer experience will pay off in return. Not only will Zero Trust improve how you work, but for those who operate within or do business with the US Government, it is an absolute necessity.