Game Servers in Google Cloud with Valheim and Kubernetes

TL;DR - https://github.com/ScaleSec/valheim-gke-server

Have you ever wanted to host a game server for friends, family, or coworkers? Have you looked into hosting in the cloud but balked at the cost? Maybe you decided to pay a hosting provider to host your server because setting one up seemed too complicated? I wanted to host a Valheim server for my coworkers but also have fun in the process. ScaleSec prides itself on an employee-first culture. When the latest Valheim patch got released, I asked our founders if they would be willing to host a dedicated server in one of our GCP environments. The answer was an immediate yes.

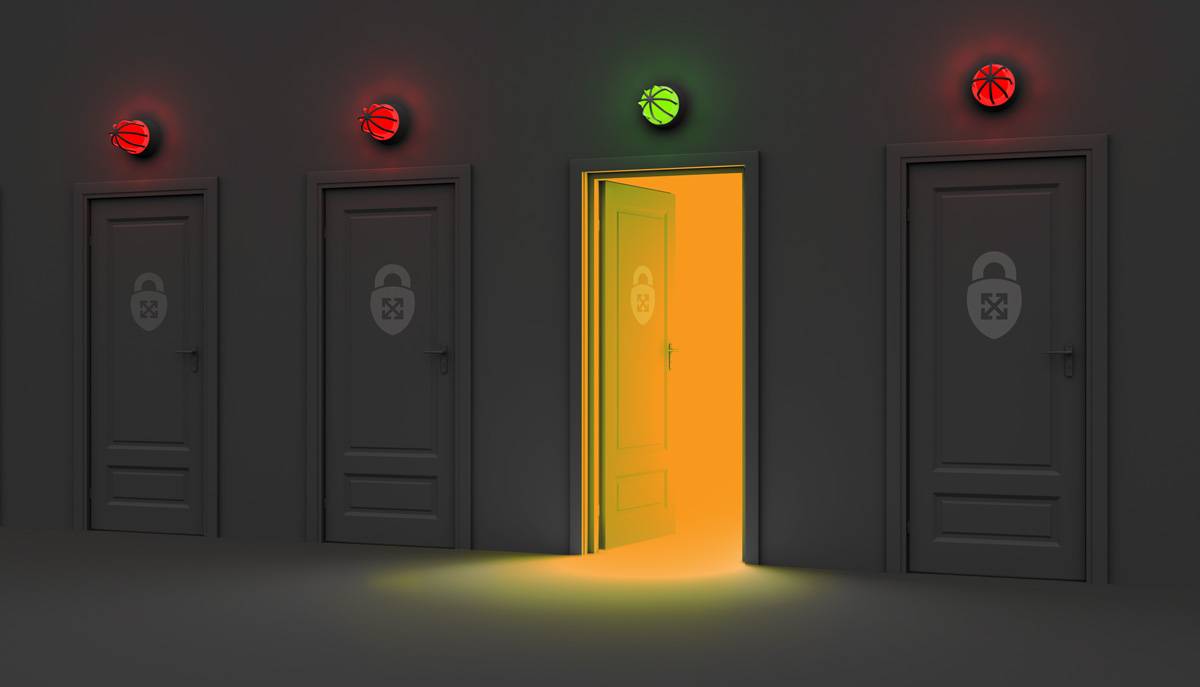

Before getting into specifics, we need to discuss why one would host their own servers instead of using a hosting provider. While paying a hosting provider for a server is quick and simple, the low entry cost comes with tradeoffs in three areas: confidentiality, integrity, and availability.

Confidentiality, Integrity, and Availability

Confidentiality means protecting your data from unauthorized access. In our case, this means protecting our server data, configurations, IP addresses, chat logs, and so on. Integrity means protecting data from unauthorized modification or deletion. Availability means having that data in a state where it can be accessed when we need it.

Hosting your own server provides flexibility and peace of mind. You don’t need to worry about the host’s downtime, business issues, bandwidth limitations, or other unknown issues.


Putting a Solution Together

Cloud hosting provides many benefits such as scalability, resiliency, and security. My goals were to leverage these benefits and also automate startup, shutdown, and backups. I also wanted to minimize costs and maximize portability. Basically, I didn’t want to spend any time on maintenance, fixes, upgrades, etc. Naturally, Kubernetes on GCP (via GKE) was the only choice.

I engineered a solution to mimic a serverless setup. I wanted to use Kubernetes for portability, simplicity, and to leverage the GCP free tier. I know Kubernetes and “simplicity” don’t usually find themselves in the same sentence, but GKE makes running Kubernetes dead simple. The “serverless” mimicry is a combination of the GCP free tier and Cloud Functions that scale the node pool up and down, so that no compute is billed while the server is not in use. This achieves a “pay for what you use” paradigm, ergo, faux-serverless.

With that said, here is an overview of all the components involved:

Faux-Serverless Setup

At the center of everything is a zonal GKE cluster that runs our game server. Using a zonal cluster reduces our Total Cost of Ownership (TCO) because its management fee falls under the GKE free tier. The size of the GKE node pool is controlled by two Cloud Functions. The first, Scale Down, is triggered by Cloud Scheduler to check the metrics of the Pod. If the server is not in use, the function scales the node pool to zero. It also has some checks in place to make sure it does not scale down too often.

The second, Scale Up, is invoked via HTTP by a user who has the Cloud Functions Invoker role. This is a least-privilege role that allows a user to invoke the function but not do anything else to our cluster. This means my coworkers can bring the server up whenever they want to play without requiring the server administrator to be available or even having an administrator at all.


I modified the container’s scripts to copy backups to Cloud Storage every time the backup job runs. For added data resilience, a third function, Backup, is triggered by another Cloud Scheduler job to create a daily archive of the world backup and, optionally, copy it to another bucket. No other principal can access this second bucket, which provides more assurance that you will have an intact backup should anything go wrong. As the military saying goes, “Two is one and one is none”.

All functions are written in Go and all resources are defined in Terraform. The container is deployed via Helm and the Scale Up function is triggered by an included script. Like I said, I wanted to spend time having fun and not dealing with any administrative burdens.

And finally, let’s talk about the security of this offering. All resources use unique Service Accounts with least-privilege roles and assignments. The GKE Cluster uses Workload Identity to map Kubernetes identities to GCP Service Accounts and also Master Authorized Networks to restrict which IPs can connect to the Kubernetes control plane.

Total Cost of Ownership

The TCO here is very low. The management fee for a single zonal GKE cluster is covered by the free tier, so the cluster itself costs nothing. A single e2-standard-2 node is enough to support at least six concurrent players, probably more, even with modifications that increase server load.

Plugging deliberately overestimated values into the GCP Pricing Calculator gives an estimate of $11.82 per month. The inputs were 4 hours of uptime a day, 7 days a week; 20 GiB of persistent SSD storage; 5 GiB of Cloud Storage; and 6 GiB-seconds plus 10 GHz-seconds for the Cloud Functions.
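The uptime part of that estimate is simple arithmetic, sketched here in Go. It assumes "4 hours x 7 days" means four hours a day, every day of the week, and it takes the node's hourly price as an input rather than hard-coding one, since prices vary by region and change over time. The function names are illustrative.

```go
package main

// monthlyUptimeHours converts a weekly play schedule into average hours per
// month, using 52 weeks spread over 12 months.
func monthlyUptimeHours(hoursPerDay, daysPerWeek float64) float64 {
	const weeksPerMonth = 52.0 / 12.0
	return hoursPerDay * daysPerWeek * weeksPerMonth
}

// monthlyNodeCost multiplies uptime by an hourly node price supplied by the
// caller. No real price is assumed here.
func monthlyNodeCost(uptimeHours, pricePerHour float64) float64 {
	return uptimeHours * pricePerHour
}
```

With 4 hours a day and 7 days a week, `monthlyUptimeHours` comes to roughly 121 hours, about one sixth of the roughly 730 hours in an always-on month. That ratio is where scaling the node pool to zero saves money.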

In my research, I have found this to be comparable to leading hosting providers with the added bonus of additional control and security, as discussed at the beginning of this blog.

Conclusion

Running your own game server in the cloud doesn’t need to be expensive or administratively arduous. With the right engineering, running a game server in the cloud is just as cheap as a managed hosting provider and comes with the added bonus of real security.

All code can be found in our GitHub repository: https://github.com/ScaleSec/valheim-gke-server. Please feel free to star, PR, or create issues with suggestions, problems, or questions.

In Part 2, I will follow up with ways to run other popular games in GCP (Minecraft, Terraria, and others) and also add some improvements to this code including:

- Generalizing all code to support any game

- Backing up to other clouds and storage services

- Adding container-level support for preemptible instances

- Various UX improvements, such as notifying the user who invoked Scale Up when the server is ready for connections, without granting them access to the cluster, possibly through a user-configurable channel such as Discord or SMS